Qwen introduction (partial copy below)

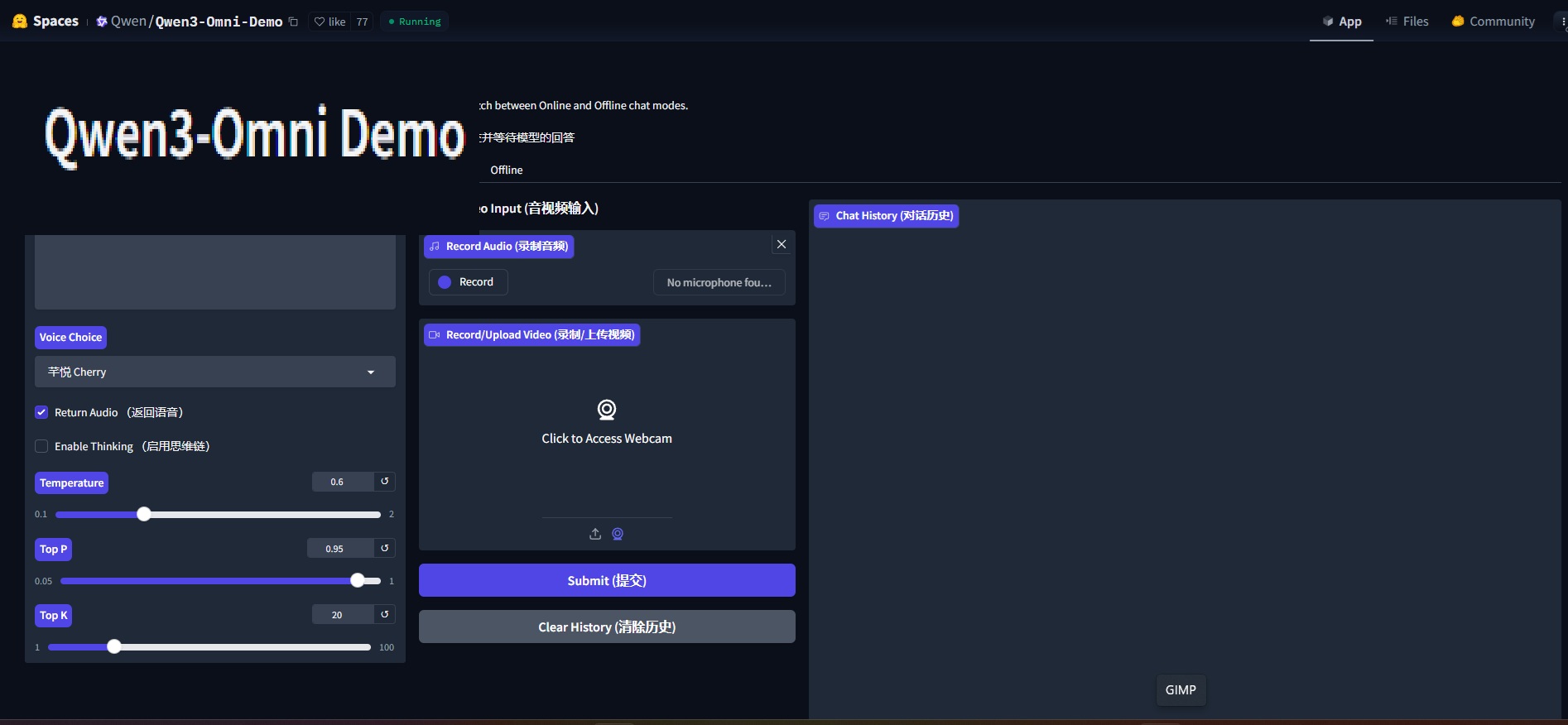

Qwen3-Omni is a natively end-to-end, multilingual omni-modal model. It processes text, images, audio, and video, and delivers real-time streaming responses in both text and natural speech. We introduce several upgrades to improve performance and efficiency.

Key Features:

- Natively Omni-Modal Pretraining: Qwen3-Omni is a natively end-to-end multilingual omni model that shows no performance degradation compared to single-modality models.

- Powerful Performance: Across 36 audio and audio-visual benchmarks, Qwen3-Omni achieves open-source SOTA on 32 and overall SOTA on 22, outperforming strong closed-source models such as Gemini-2.5-Pro, Seed-ASR, and GPT-4o-Transcribe.

- Multilingual Support: Qwen3-Omni supports text interaction in 119 languages, speech understanding in 19 languages, and speech generation in 10 languages.

- Faster Response: Qwen3-Omni achieves latency as low as 211 ms in audio-only scenarios and as low as 507 ms in audio-video scenarios.

- Longer Understanding: Qwen3-Omni supports understanding of audio inputs up to 30 minutes long.

- Personalized Customization: Qwen3-Omni can be freely adapted via system prompts to modify response styles, personas, and behavioral attributes.

- Tool Calling: Qwen3-Omni supports function calling, enabling seamless integration with external tools and services.

- Open-Source Universal Audio Captioner: Qwen3-Omni-30B-A3B-Captioner, a low-hallucination yet highly detailed universal audio captioning model, fills a gap in the open-source community.
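The system-prompt customization and tool-calling features listed above can be illustrated on the client side with a minimal sketch. This is not Qwen3-Omni's official interface: it assumes an OpenAI-compatible chat message format (roles `system`, `user`, `assistant`, `tool`, with `tool_calls` whose `arguments` field is a JSON string), and the `get_weather` tool, the `TOOLS` registry, and the helper functions are all hypothetical. No model is called; the tool call is hand-written to show how a client might dispatch one.

```python
import json
from typing import Any, Callable


# Hypothetical local tool; name and behavior are illustrative only.
def get_weather(city: str) -> dict[str, Any]:
    """Return a canned weather report (stand-in for a real service call)."""
    return {"city": city, "forecast": "sunny", "temp_c": 24}


# Registry of the only functions the client is willing to execute.
TOOLS: dict[str, Callable[..., Any]] = {"get_weather": get_weather}

# Tool description in the JSON-schema style used by OpenAI-compatible
# chat APIs (assumed format); this is what would be sent to the model.
TOOL_SPECS = [
    {
        "type": "function",
        "function": {
            "name": "get_weather",
            "description": "Get the current weather for a city.",
            "parameters": {
                "type": "object",
                "properties": {"city": {"type": "string"}},
                "required": ["city"],
            },
        },
    }
]


def build_messages(persona: str, user_text: str) -> list[dict[str, Any]]:
    """Start a conversation whose system prompt sets persona and response style."""
    return [
        {"role": "system", "content": persona},
        {"role": "user", "content": user_text},
    ]


def dispatch_tool_call(tool_call: dict[str, Any]) -> dict[str, Any]:
    """Run one model-emitted tool call and wrap its result as a tool message."""
    fn = tool_call["function"]
    name = fn["name"]
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    # Arguments arrive as a JSON-encoded string in the assumed format.
    args = json.loads(fn["arguments"])
    result = TOOLS[name](**args)
    return {
        "role": "tool",
        "tool_call_id": tool_call["id"],
        "content": json.dumps(result),
    }


if __name__ == "__main__":
    messages = build_messages(
        "You are a cheerful travel assistant. Answer in one sentence.",
        "What's the weather in Hangzhou?",
    )
    # Hand-written stand-in for a tool call the model might emit.
    call = {
        "id": "call_0",
        "type": "function",
        "function": {"name": "get_weather", "arguments": '{"city": "Hangzhou"}'},
    }
    # Record the assistant's tool call, then append the tool's result.
    messages.append({"role": "assistant", "content": None, "tool_calls": [call]})
    messages.append(dispatch_tool_call(call))
    print(messages[-1]["content"])
    # prints {"city": "Hangzhou", "forecast": "sunny", "temp_c": 24}
```

Keeping an explicit name-to-callable registry means the client only executes functions it has deliberately exposed; an unknown tool name raises an error instead of being silently ignored or evaluated dynamically.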